Introduction

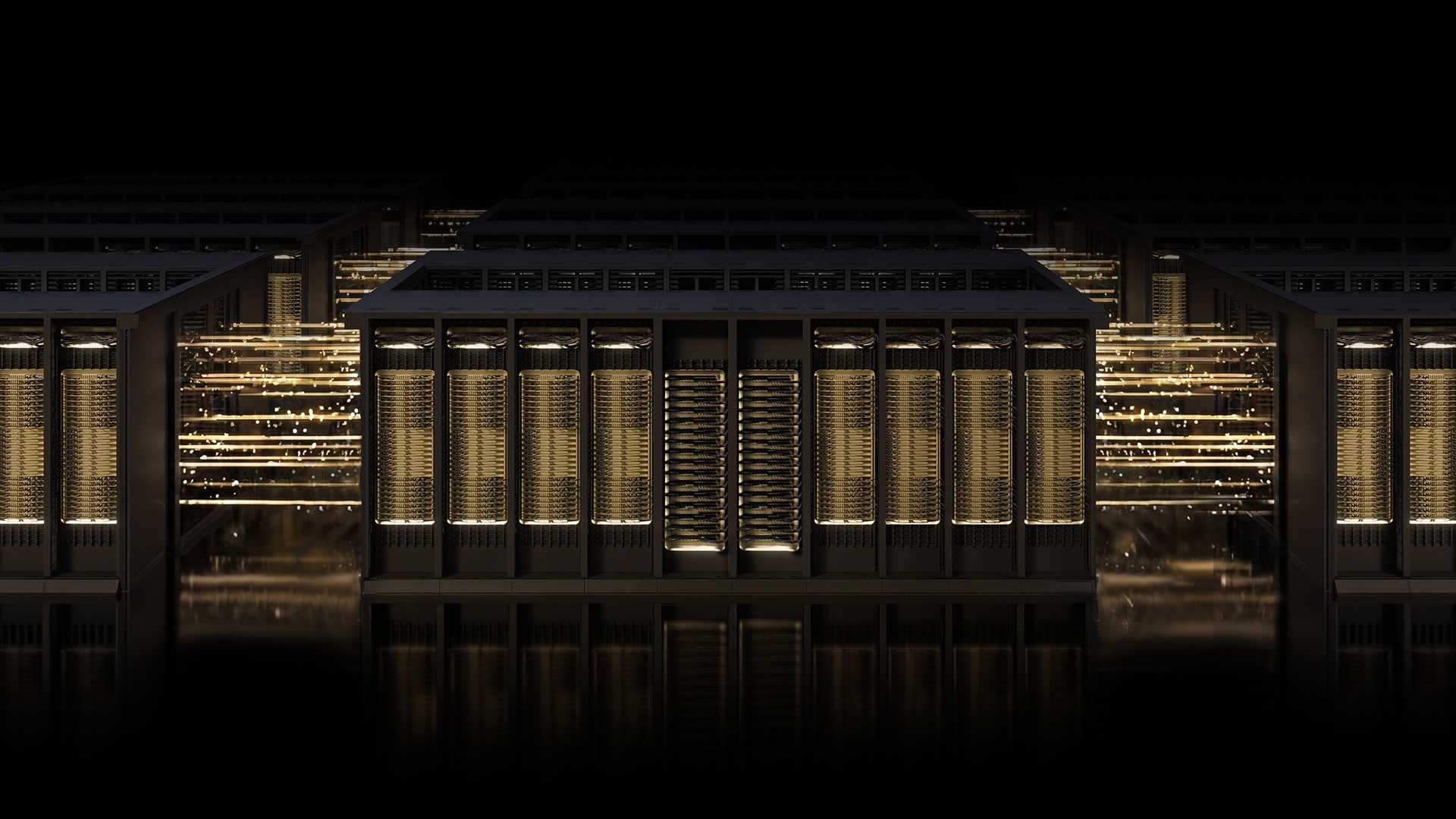

The race to build the world's most powerful AI factories is accelerating, and the networking infrastructure must keep pace with the ambitions of artificial intelligence itself. NVIDIA's Spectrum-X Ethernet scale-out infrastructure has emerged as the most advanced AI networking technology available today, deployed by industry leaders who demand uncompromising performance, resilience, and scale. With the introduction of Multipath Reliable Connection (MRC), this open, AI-native Ethernet fabric is setting a new standard for gigascale AI deployments.

The Challenge of AI Networking at Scale

Training large-scale AI models requires massive data throughput and minimal latency. Traditional networking approaches often lead to congestion, packet loss, and inefficient GPU utilization, causing costly idle time during long training runs. In conventional Ethernet fabrics, for example, each connection is typically hashed onto a single path, so a few large flows can collide on the same link while other links sit underused. As AI models grow to billions of parameters, the network must act as a seamless, high-speed backbone that can handle the intense data demands of distributed computing.

NVIDIA's Spectrum-X addresses this by combining purpose-built hardware, deep telemetry, and intelligent fabric control. The latest advancement is MRC, an RDMA transport protocol that revolutionizes how data flows across AI clusters.

What Is MRC? A Smarter Way to Move Data

Multipath Reliable Connection (MRC) enables a single RDMA connection to distribute traffic across multiple network paths simultaneously. Think of it as replacing a single-lane road across town with a well-planned street grid, paired with a real-time traffic app that lets drivers reroute around slowdowns and road closures. This architectural shift dramatically improves throughput, load balancing, and availability for AI training fabrics.
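To make the multipath idea concrete, here is a toy Python model of one connection spreading its packets across several paths. It assumes simple round-robin spraying and a per-connection sequence number that lets the receiver put packets back in order no matter which path delivered them first. The `Packet` fields and the `spray`/`reassemble` functions are hypothetical illustrations, not taken from the MRC specification or its wire format.

```python
import random
from dataclasses import dataclass


@dataclass
class Packet:
    seq: int        # hypothetical per-connection sequence number
    path: int       # hypothetical index of the network path the packet takes
    payload: bytes


def spray(message: bytes, mtu: int, num_paths: int) -> list[Packet]:
    """Split one connection's message into packets and spread them round-robin across paths."""
    packets = []
    for seq, offset in enumerate(range(0, len(message), mtu)):
        packets.append(Packet(seq=seq, path=seq % num_paths, payload=message[offset:offset + mtu]))
    return packets


def reassemble(packets: list[Packet]) -> bytes:
    """Order packets by sequence number, so arrival order across paths does not matter."""
    ordered = sorted(packets, key=lambda p: p.seq)
    return b"".join(p.payload for p in ordered)


if __name__ == "__main__":
    msg = bytes(range(256)) * 4                 # 1 KiB test message
    pkts = spray(msg, mtu=64, num_paths=4)      # 16 packets over 4 paths
    random.shuffle(pkts)                        # simulate out-of-order arrival over different paths
    assert reassemble(pkts) == msg
    print([sum(1 for p in pkts if p.path == i) for i in range(4)])  # → [4, 4, 4, 4]
```

The key design point the sketch captures is that the connection, not the path, owns the ordering state: because every packet carries its own sequence number, any path can carry any packet, which is what frees the traffic from being pinned to one route.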

MRC was developed collaboratively by NVIDIA, Microsoft, and OpenAI, and has already proven its value in production environments. As Sachin Katti, head of industrial compute at OpenAI, noted: "Deploying MRC in the Blackwell generation was very successful and was made possible by a strong collaboration with NVIDIA. MRC's end-to-end approach enabled us to avoid much of the typical network-related slowdowns and interruptions and maintain the efficiency of frontier training runs at scale."

How MRC Works Under the Hood

MRC keeps GPU utilization high by load-balancing traffic across all available paths, ensuring every GPU gets the bandwidth it needs throughout a training run. It sustains high bandwidth even under congestion by steering traffic away from overloaded paths in real time. When data loss occurs, intelligent retransmission enables rapid, precise recovery, minimizing the impact of short-lived interruptions on long-running jobs and helping avoid GPU idle time.

Administrators also gain fine-grained visibility and control over traffic paths, simplifying operations and accelerating troubleshooting. This level of network intelligence is critical for maintaining the efficiency of frontier AI training.
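A second toy model illustrates three of the behaviors described above: spreading load across all available paths, steering away from congested ones, and retransmitting only what was lost. It assumes each path exposes a single congestion score (for example, a smoothed rate of ECN marks) and that the receiver can report exactly which sequence numbers are missing. `PathState`, `Sender`, `pick_path`, `missing`, and the 0.5 threshold are all hypothetical; this is a sketch of the general technique, not MRC's actual congestion-control or retransmission algorithm.

```python
from dataclasses import dataclass


@dataclass
class PathState:
    # Hypothetical per-path congestion signal, e.g. a smoothed rate of ECN marks (0.0 = idle).
    congestion: float = 0.0
    # Packets this sender has assigned to the path so far (a simple load proxy for the toy).
    assigned: int = 0


@dataclass
class Sender:
    paths: list[PathState]
    # Hypothetical cutoff: paths at or above this score are treated as congested and skipped.
    threshold: float = 0.5

    def pick_path(self) -> int:
        """Pick the least-loaded path among those below the congestion threshold."""
        healthy = [i for i, p in enumerate(self.paths) if p.congestion < self.threshold]
        # If every path is congested, fall back to considering all of them.
        candidates = healthy or list(range(len(self.paths)))
        # Ties on load are broken by lower congestion, then by lower path index.
        return min(candidates, key=lambda i: (self.paths[i].assigned, self.paths[i].congestion))

    def send(self, seqs) -> dict[int, int]:
        """Assign each sequence number to a path and return the seq -> path mapping."""
        assignment = {}
        for seq in seqs:
            i = self.pick_path()
            self.paths[i].assigned += 1
            assignment[seq] = i
        return assignment


def missing(expected, received: set[int]) -> list[int]:
    """Receiver side: report exactly which sequence numbers never arrived."""
    return [s for s in expected if s not in received]


if __name__ == "__main__":
    # Path 1 is already reporting heavy congestion, so it should never be chosen.
    sender = Sender([PathState(), PathState(congestion=0.9), PathState(), PathState()])
    first = sender.send(range(6))
    print(first)  # → {0: 0, 1: 2, 2: 3, 3: 0, 4: 2, 5: 3}

    # Suppose everything sent on path 3 was dropped.
    received = {seq for seq, path in first.items() if path != 3}
    lost = missing(range(6), received)
    print(lost)  # → [2, 5]

    # Mark path 3 as congested and resend only the lost packets.
    sender.paths[3].congestion = 1.0
    print(sender.send(lost))  # → {2: 0, 5: 2}
```

In this sketch, recovery resends only the missing sequence numbers rather than everything after the first gap (as go-back-N schemes do), which keeps the cost of a brief loss proportional to what was actually lost; the retransmitted packets also avoid the path that just dropped traffic.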

Real-World Deployments: Powering the Largest AI Factories

MRC is already in production at some of the world's largest AI supercomputing centers. Microsoft's Fairwater and Oracle Cloud Infrastructure (OCI) Abilene data centers, two of the largest AI factories purpose-built for training and deploying frontier LLMs, rely on MRC to meet their performance, scale, and efficiency requirements. NVIDIA Spectrum-X Ethernet provides the network foundation needed to run these massive AI models with confidence.

These deployments demonstrate the power of the Spectrum-X Ethernet platform: purpose-built hardware, deep telemetry, and intelligent fabric control working together to take a new protocol from concept to gigascale AI production.

Open Collaboration: From Proprietary to Community Standard

Proven first in production with performance optimized on NVIDIA Spectrum-X Ethernet hardware, MRC has now been released as an open specification through the Open Compute Project (OCP). This move underscores NVIDIA's commitment to an open, AI-native ecosystem. By making MRC an industry standard, NVIDIA and its partners enable broader adoption and innovation across the AI networking landscape.

The result is a networking fabric that is not only powerful but also open and interoperable, allowing more organizations to benefit from gigascale AI capabilities.

Conclusion: A New Standard for AI Infrastructure

As AI continues to push the boundaries of what's possible, the underlying network must keep pace. NVIDIA Spectrum-X with MRC represents a leap forward in networking technology, solving the fundamental challenges of congestion, load balancing, and reliability at scale. With proven success at OpenAI, Microsoft, and Oracle, and now an open specification, MRC is setting the standard for the next generation of AI factories.

For organizations building or scaling their AI infrastructure, adopting an open, AI-native Ethernet fabric like Spectrum-X with MRC is becoming a strategic imperative to achieve maximum GPU utilization and training efficiency.