Introduction: Rethinking Ad Technology

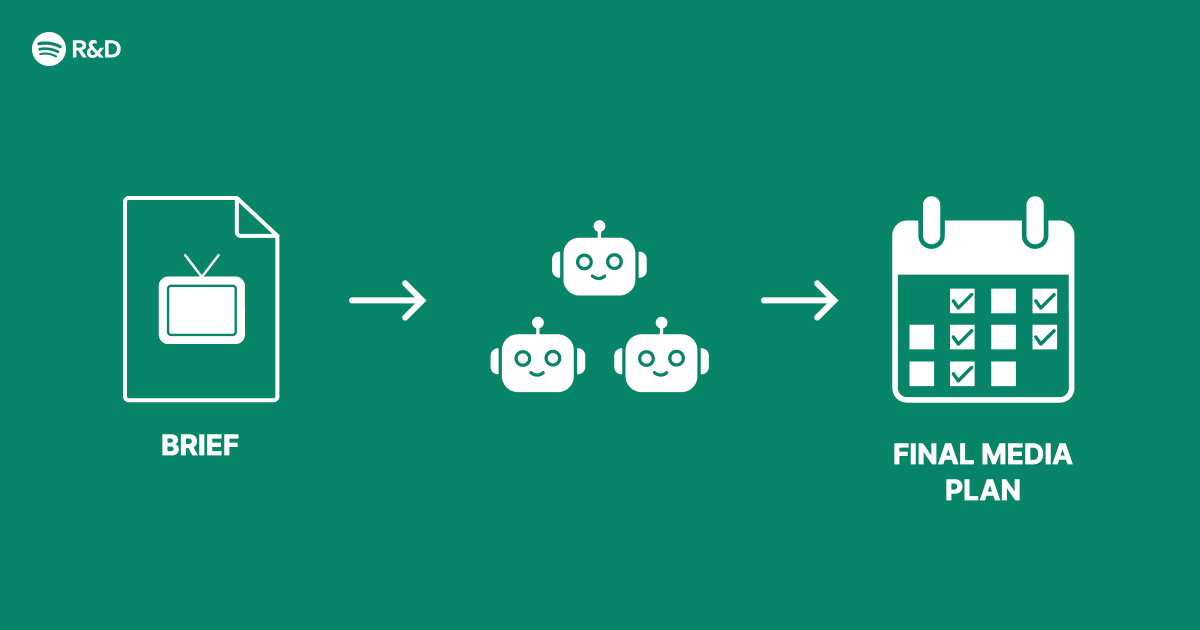

When Spotify Engineering set out to improve its advertising platform, the goal was not simply to add another artificial intelligence feature. Instead, the team sought to address a fundamental structural challenge in ad delivery: how to coordinate multiple decision-making processes, such as targeting, bidding, creative selection, and budget pacing, without creating bottlenecks or sacrificing performance. The solution was a multi-agent architecture in which specialized agents collaborate, negotiate, and optimize in real time. This article explores the design principles, implementation details, and results of that approach.

The Structural Problem in Traditional Ad Systems

Most advertising platforms rely on a monolithic model where a single engine handles every aspect of an ad campaign. This creates a cascade of dependencies: if one component slows down or makes a suboptimal choice, the entire system suffers. Latency increases, relevance drops, and advertisers see diminishing returns. Spotify faced these very issues as its user base grew and advertising inventory expanded across podcasts, music, and video.

The core issue was coordination. For example, targeting rules might conflict with budget constraints, or a creative format might not align with the real-time auction value. Traditional pipelines attempted to resolve these conflicts sequentially, with each stage committing to its decision before the next stage could weigh in, which led to inefficiencies and missed opportunities.

Why a Multi-Agent Architecture?

Multi-agent systems draw inspiration from distributed intelligence: think of a team of experts, each handling a specific task, communicating asynchronously, and reaching consensus through shared goals. Spotify adopted this approach for advertising because it mirrors the natural complexity of ad delivery, where multiple objectives (user experience, advertiser ROI, platform revenue) must be balanced simultaneously.

Key Principles of the Architecture

- Specialization: Each agent focuses on a narrow domain (e.g., budget pacing, creative selection, bid optimization).

- Autonomy: Agents make local decisions without waiting for a central controller, reducing latency.

- Coordination via Message Passing: Agents share state and constraints through a lightweight message bus, enabling real-time adjustments.

- Failover: If one agent fails, others can adapt or take over its responsibilities gracefully.
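The autonomy and failover principles can be sketched as a fan-out: a request goes to every agent concurrently, and any agent that raises an error or misses a shared deadline is replaced by a conservative fallback decision. This is a minimal Python sketch under stated assumptions; the agent names, the `decide` function, the 50 ms deadline, and the fallback values are hypothetical and not taken from Spotify's implementation.

```python
import time
from concurrent.futures import ThreadPoolExecutor, wait
from typing import Any, Callable, Dict

Decision = Dict[str, Any]
Agent = Callable[[Dict[str, Any]], Decision]  # hypothetical agent signature


def decide(
    request: Dict[str, Any],
    agents: Dict[str, Agent],
    fallbacks: Dict[str, Decision],
    deadline_s: float = 0.05,
) -> Dict[str, Decision]:
    """Run all agents in parallel; use an agent's fallback if it fails or is late."""
    pool = ThreadPoolExecutor(max_workers=len(agents))
    futures = {name: pool.submit(agent, request) for name, agent in agents.items()}
    # Wait once for the shared deadline rather than once per agent.
    wait(list(futures.values()), timeout=deadline_s)
    # Return without blocking on stragglers; they finish in the background.
    pool.shutdown(wait=False)

    results: Dict[str, Decision] = {}
    for name, future in futures.items():
        if future.done() and future.exception() is None:
            results[name] = future.result()
        else:
            results[name] = fallbacks[name]  # failed or missed the deadline
    return results


# Hypothetical agents: one healthy, one slow, one failing.
def targeting(request: Dict[str, Any]) -> Decision:
    return {"segment": "podcast_listeners"}


def bidding(request: Dict[str, Any]) -> Decision:
    time.sleep(0.3)  # simulates an agent that overruns the deadline
    return {"bid": 2.0}


def creative(request: Dict[str, Any]) -> Decision:
    raise RuntimeError("creative model unavailable")


result = decide(
    {"user_id": "u1"},
    {"targeting": targeting, "bidding": bidding, "creative": creative},
    {
        "targeting": {"segment": "run_of_network"},
        "bidding": {"bid": 0.5},
        "creative": {"format": "audio"},
    },
)
print(result)
```

Because every agent shares one deadline, the request's latency is bounded by roughly `deadline_s` no matter how many agents are slow; a production version would also need to record which agents fell back so that degraded decisions stay visible.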

Implementation: From Monolith to Multi-Agent

Transitioning to a multi-agent architecture required significant re-engineering. Spotify broke down the ad server into discrete agents, each running as an independent microservice. Below are the core agents and their roles:

- The Targeting Agent: Evaluates user context (listening history, location, device) and ranks eligible ad segments.

- The Bidding Agent: Predicts the optimal bid price using reinforcement learning, factoring in historical auction data.

- The Creative Agent: Selects the best ad format (audio, video, interactive) and ensures compliance with platform policies.

- The Budget Agent: Monitors campaign spend across time windows and adjusts pacing to avoid overspend or under-delivery.

- The Orchestration Agent: Coordinates trade-offs between agents—for instance, when the Budget Agent signals a need to reduce spend, the Bidding Agent lowers bids accordingly.
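The hand-off between the Budget Agent and the Bidding Agent can be illustrated with a simple proportional pacing rule. The sketch below assumes a linear spend schedule across the time window and a hypothetical gain of 4: it compares actual spend with where spend should be at this point in the window and turns the gap into a multiplier that the Bidding Agent applies to its base bid. The function names and parameters are illustrative, not Spotify's.

```python
def pacing_multiplier(
    budget: float,
    spent: float,
    elapsed_s: float,
    window_s: float,
    gain: float = 4.0,
    max_multiplier: float = 2.0,
) -> float:
    """Proportional pacing: >1.0 means spend faster, <1.0 means slow down."""
    if budget <= 0 or spent >= budget:
        return 0.0  # budget exhausted: stop bidding
    # Linear schedule (an assumption): spend should track elapsed time.
    target_spent = budget * min(elapsed_s / window_s, 1.0)
    # Normalized pacing error: positive when under-delivering, negative when overspending.
    error = (target_spent - spent) / budget
    return max(0.0, min(max_multiplier, 1.0 + gain * error))


def paced_bid(base_bid: float, multiplier: float) -> float:
    """The bid the Bidding Agent would submit after applying the Budget Agent's signal."""
    return base_bid * multiplier


# Halfway through a one-hour window with 600 of 1000 already spent: overspending.
m = pacing_multiplier(budget=1000.0, spent=600.0, elapsed_s=1800.0, window_s=3600.0)
print(m, paced_bid(2.0, m))
```

A campaign that is exactly on schedule gets a multiplier of 1.0, an overspending one has its bids scaled down, and the clamp keeps an under-delivering campaign from bidding more than twice its base price.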

Communication Protocol

Agents communicate via a publish-subscribe system built on Apache Kafka. Each agent publishes its decisions and constraints, while others subscribe to relevant topics. For example, the Budget Agent publishes a budget-left signal; the Bidding Agent consumes it to adjust its bid curve. This decouples the agents, allowing independent scaling and testing.
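The decoupling described above can be sketched without a Kafka cluster by using an in-memory bus with the same publish-subscribe shape. The `MessageBus` class, the `budget-left` topic name, the message fields, and the bid-curve rule (full bid until half the budget remains, then a linear taper) are hypothetical stand-ins. This is not the Kafka client API, and unlike Kafka it delivers messages synchronously and keeps no log.

```python
from collections import defaultdict
from typing import Any, Callable, DefaultDict, Dict, List

Message = Dict[str, Any]
Handler = Callable[[Message], None]


class MessageBus:
    """In-memory stand-in for a topic-based bus such as Kafka (synchronous, no persistence)."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, message: Message) -> None:
        for handler in self._subscribers[topic]:
            handler(message)


class BudgetAgent:
    def __init__(self, bus: MessageBus, campaign_id: str, budget: float) -> None:
        self.bus = bus
        self.campaign_id = campaign_id
        self.budget = budget
        self.spent = 0.0

    def record_spend(self, amount: float) -> None:
        self.spent += amount
        remaining = max(self.budget - self.spent, 0.0) / self.budget
        # Publish the budget-left signal; the Budget Agent does not know who consumes it.
        self.bus.publish("budget-left", {"campaign_id": self.campaign_id, "remaining": remaining})


class BiddingAgent:
    def __init__(self, bus: MessageBus, base_bid: float) -> None:
        self.base_bid = base_bid
        self.scale: Dict[str, float] = {}
        bus.subscribe("budget-left", self.on_budget_left)

    def on_budget_left(self, message: Message) -> None:
        # Hypothetical bid curve: full bid above 50% remaining, linear taper below it.
        self.scale[message["campaign_id"]] = min(1.0, message["remaining"] / 0.5)

    def bid(self, campaign_id: str) -> float:
        return self.base_bid * self.scale.get(campaign_id, 1.0)


bus = MessageBus()
budget_agent = BudgetAgent(bus, "campaign-42", budget=100.0)
bidding_agent = BiddingAgent(bus, base_bid=2.0)
budget_agent.record_spend(70.0)
print(bidding_agent.bid("campaign-42"))
```

Because the Budget Agent only publishes to a topic, the Bidding Agent can be swapped out, scaled, or tested in isolation by subscribing a different handler, which is the property the Kafka-based design relies on.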

Challenges Encountered and Overcome

No major architectural change comes without hurdles. Spotify faced three main challenges:

Challenge 1: Agent Conflict Resolution

Agents sometimes pulled in opposing directions. For example, the Creative Agent favored a premium video ad for a high-value user, while the Budget Agent wanted to cap costs. The team introduced a weighted voting mechanism in which each agent votes for an action with a confidence score. The Orchestration Agent then picks the action with the highest weighted sum, so the outcome reflects the combined preferences of all agents and no single agent dominates.
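The weighted vote can be sketched in a few lines. Each agent submits a vote for an action with a confidence score; the orchestrator sums `weight * confidence` per action and picks the largest total. The `Vote` type, the per-agent weights (defaulting to 1.0), the action names, and the tie-breaking rule (the first action to reach the top score wins) are assumptions for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import DefaultDict, Dict, Iterable, Optional


@dataclass(frozen=True)
class Vote:
    agent: str
    action: str
    confidence: float  # assumed to lie in [0, 1]


def resolve(votes: Iterable[Vote], agent_weights: Optional[Dict[str, float]] = None) -> str:
    """Return the action with the highest weighted sum of confidences."""
    weights = agent_weights or {}
    scores: DefaultDict[str, float] = defaultdict(float)
    for vote in votes:
        scores[vote.action] += weights.get(vote.agent, 1.0) * vote.confidence
    if not scores:
        raise ValueError("no votes to resolve")
    # max() keeps the first action seen among equal scores.
    return max(scores, key=lambda action: scores[action])


# A hypothetical version of the conflict above: premium video versus a cheaper audio ad.
votes = [
    Vote("creative", "premium_video", 0.9),
    Vote("budget", "standard_audio", 0.8),
    Vote("bidding", "standard_audio", 0.3),
]
print(resolve(votes))
print(resolve(votes, {"creative": 2.0}))
```

With equal weights the two cost-conscious votes outweigh the Creative Agent's single strong preference; doubling the Creative Agent's weight flips the result, which shows how the weights encode how much each agent's opinion counts.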

Challenge 2: Latency in Decision Loops

Real-time ad decisions must happen in milliseconds, and initial implementations suffered from communication overhead between agents. Spotify reduced that overhead by caching agent outputs for common scenarios and by using predictive pre-computation: agents anticipate likely future states, so fewer decisions require a synchronous round-trip.
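Caching agent outputs for common scenarios amounts to memoizing decisions keyed by a coarse description of the request, with a short time-to-live so stale decisions expire; predictive pre-computation amounts to filling that cache before the requests arrive. The sketch below assumes a scenario key of (device, country, content type), a hypothetical `TTLCache` class, and an injectable clock so expiry can be tested. It illustrates the idea and is not Spotify's caching layer.

```python
import time
from typing import Any, Callable, Dict, Hashable, Iterable, Tuple


class TTLCache:
    """Memoizes agent outputs per scenario key for ttl_s seconds."""

    def __init__(self, ttl_s: float, clock: Callable[[], float] = time.monotonic) -> None:
        self.ttl_s = ttl_s
        self.clock = clock
        self._entries: Dict[Hashable, Tuple[float, Any]] = {}

    def get_or_compute(self, key: Hashable, compute: Callable[[], Any]) -> Any:
        now = self.clock()
        entry = self._entries.get(key)
        if entry is not None and now - entry[0] < self.ttl_s:
            return entry[1]  # fresh hit: no agent round-trip needed
        value = compute()  # miss or expired: ask the agent synchronously
        self._entries[key] = (now, value)
        return value

    def warm(self, keys: Iterable[Hashable], compute_for: Callable[[Any], Any]) -> None:
        """Predictive pre-computation: fill entries for scenarios expected soon."""
        for key in keys:
            self._entries[key] = (self.clock(), compute_for(key))


# Usage with a fake clock and a counter standing in for an expensive agent call.
fake_now = [0.0]
calls = [0]


def creative_agent(scenario: Tuple[str, str, str]) -> str:
    calls[0] += 1
    return "audio" if scenario[2] == "podcast" else "video"


cache = TTLCache(ttl_s=30.0, clock=lambda: fake_now[0])
key = ("mobile", "SE", "podcast")
cache.get_or_compute(key, lambda: creative_agent(key))  # miss: calls[0] == 1
cache.get_or_compute(key, lambda: creative_agent(key))  # hit: calls[0] still 1
fake_now[0] = 31.0
cache.get_or_compute(key, lambda: creative_agent(key))  # expired: calls[0] == 2
```

The TTL is the main design lever: a longer TTL saves more round-trips but lets decisions drift further from the agents' current state, which matters most for fast-moving signals such as remaining budget.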

Challenge 3: Consistency and Debugging

With multiple agents running concurrently, tracing a single ad decision became complex. The team implemented a distributed tracing system based on OpenTelemetry that tags every agent’s contribution to a decision with that decision’s unique run ID. This allowed engineers to replay failed auctions and pinpoint the agent responsible for a suboptimal decision.
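The core idea behind the tracing setup is that every record an agent emits carries the identifier of the ad decision it contributed to, so all records for one decision can be pulled back together. In OpenTelemetry this role is played by the trace ID, with each agent's work recorded as a span (for example via `tracer.start_as_current_span`); the dependency-free sketch below mimics that with Python's `contextvars` and an in-memory log. The `serve_ad`, `record`, and `decisions_for` functions, the log format, and the agent outputs are hypothetical.

```python
import contextvars
import uuid
from typing import Any, Dict, List

# The current decision's run ID, visible to every agent call made in this context.
current_run_id = contextvars.ContextVar("run_id", default=None)
TRACE_LOG: List[Dict[str, Any]] = []


def record(agent: str, decision: Dict[str, Any]) -> None:
    """Append an agent's contribution, tagged with the current run ID."""
    TRACE_LOG.append({"run_id": current_run_id.get(), "agent": agent, "decision": decision})


def serve_ad(request: Dict[str, Any]) -> str:
    run_id = uuid.uuid4().hex
    token = current_run_id.set(run_id)
    try:
        # Stand-ins for real agent calls; each one records its contribution.
        record("targeting", {"segment": "podcast_listeners"})
        record("bidding", {"bid": 1.2})
        record("creative", {"format": "audio"})
    finally:
        current_run_id.reset(token)  # avoid leaking the ID into unrelated work
    return run_id


def decisions_for(run_id: str) -> List[Dict[str, Any]]:
    """Reassemble every agent contribution for one ad decision, in order."""
    return [entry for entry in TRACE_LOG if entry["run_id"] == run_id]


first = serve_ad({"user_id": "u1"})
second = serve_ad({"user_id": "u2"})
for entry in decisions_for(first):
    print(entry["agent"], entry["decision"])
```

Context variables are used rather than passing the ID explicitly because they propagate automatically into asyncio tasks created within the context, which is similar to how OpenTelemetry carries the active span.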

Results: Smarter Advertising, Better Outcomes

The multi-agent architecture went live in 2024 and delivered immediate improvements:

- 25% higher advertiser ROI due to more precise bidding and creative selection.

- 40% reduction in ad serving latency because agents worked in parallel rather than sequentially.

- 90% fewer budget overruns thanks to the Budget Agent’s real-time pacing.

- Improved user satisfaction: ads felt more relevant and less intrusive because the system explicitly balanced user-experience goals against the other objectives.

Future Directions and Lessons Learned

Spotify plans to extend the multi-agent model to other domains, such as playlist personalization and content recommendation. The team also emphasizes that agent design is not a one-time effort—it requires continuous monitoring, retraining, and fine-tuning of reward functions. The key takeaway is that complex problems demand distributed solutions, and multi-agent architectures offer a scalable path forward for real-time decision-making.

For a deeper dive into the technical specifics, such as agent reward functions and experiment design, explore Spotify Engineering’s public research papers on multi-agent reinforcement learning.